How AI Turned Everyone into a Consumer Reporting Agency

AI tools are valuable precisely because they sort, filter, and score — but that core capability can trigger regulations that sound unrelated to what you’re doing.

A new class action alleges that AI hiring tools can violate the Fair Credit Reporting Act because their scores are legally “consumer reports,” even if you thought you were just screening applicants.

This isn’t just about hiring: anywhere you’re using AI to score or rank people, ask what regulations apply to that activity, not just to “AI.”

AI tools are valuable because they sort and filter. That’s the point. You give them a flood of data and they rank, score, surface what matters.

But that same capability can trigger regulations you didn’t expect.

You think you’re using an applicant tracking system. The law might say you’re operating a consumer reporting agency.

The Case

On January 20, 2026, a class action was filed against Eightfold AI, claiming its recruiting platform violates the Fair Credit Reporting Act (FCRA).

Eightfold’s AI generates “likelihood of success” scores for job applicants. Employers use these to rank candidates.

The plaintiffs’ claim: Those scores are “consumer reports” under the FCRA. If so, Eightfold and its customers must provide notice, get authorization, follow adverse action procedures, and offer dispute rights.

The lawsuit alleges Eightfold does none of this and instead generates hidden scores that applicants never see.

The legal question: Does an AI-generated hiring score count as a consumer report?

The CFPB’s 2024 guidance (Circular 2024-06) says algorithmic scores used to evaluate people can qualify. If the plaintiffs are right, this isn’t just Eightfold’s problem; it’s a problem for every employer using AI to score applicants.

Statutory damages: $100–$1,000 per consumer for willful FCRA violations. Across a class of applicants, that adds up.
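To make “that adds up” concrete, here is a back-of-the-envelope sketch of the statutory damages range. It assumes one willful violation per class member at the $100 floor and $1,000 ceiling; the class sizes are hypothetical, chosen only for illustration, and the sketch ignores actual damages, punitive damages, attorney’s fees, and whether a court would find any violation willful at all.

```python
# Back-of-the-envelope FCRA statutory damages range for a class.
# Assumption: one willful violation counted per class member, valued
# between the $100 statutory floor and the $1,000 ceiling.
# Class sizes below are HYPOTHETICAL and for illustration only.

STATUTORY_MIN = 100    # dollars per class member
STATUTORY_MAX = 1_000  # dollars per class member


def damages_range(class_size: int) -> tuple[int, int]:
    """Return (low, high) statutory damages in dollars for a class."""
    if class_size < 0:
        raise ValueError("class_size must be non-negative")
    return class_size * STATUTORY_MIN, class_size * STATUTORY_MAX


if __name__ == "__main__":
    for size in (1_000, 10_000, 100_000):  # hypothetical class sizes
        low, high = damages_range(size)
        print(f"{size:>7,} applicants: ${low:,} to ${high:,}")
```

With 10,000 class members, for example, the statutory range alone runs from $1,000,000 to $10,000,000, before fees or punitive damages enter the picture.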

Case: Kistler v. Eightfold AI Inc., No. C26-00214, Contra Costa Superior Court (January 20, 2026)

The Pattern

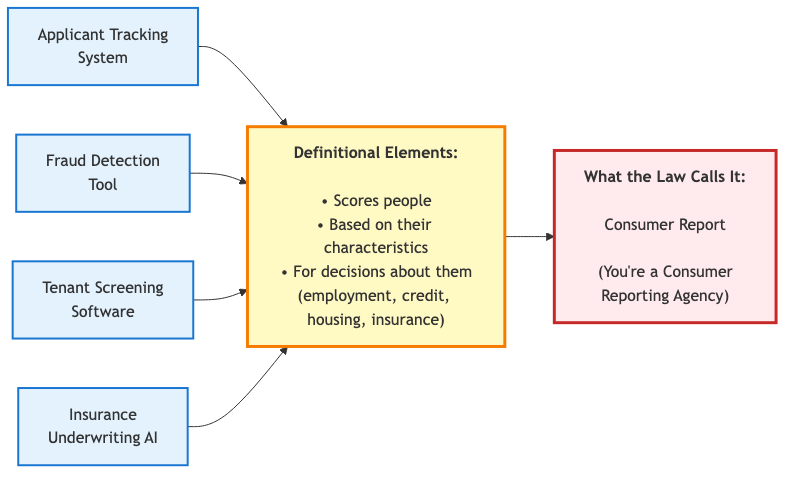

This isn’t just about hiring. AI tools that score people trigger regulations based on the activity, not the tool. Plenty of activities that don’t feel like, say, credit reporting still fall within broad legal definitions:

Lending: AI credit scores might trigger FCRA, ECOA, and state lending laws

Tenant screening: Rental applicant scores trigger FCRA and the Fair Housing Act

Insurance: Underwriting AI triggers state insurance regulations and anti-discrimination laws

Fraud detection: Transaction scoring might trigger FCRA if used to deny services

The regulations were written decades ago. They didn’t anticipate AI. But they cover decision-making about people, and that’s what AI does.

What to Ask

Before deploying AI that scores or ranks people:

1. What decision is the AI informing?

Hiring, lending, tenant screening, insurance pricing, account access?

2. What regulations apply to that decision?

Not “What AI regulations apply?” but “What regulations apply to making hiring, lending, or screening decisions?”

3. Does the AI generate scores that could be consumer reports?

If it evaluates people for employment, credit, insurance, or housing, FCRA might apply.

The Point

AI’s core strength — sorting and filtering — is what triggers unexpected regulatory exposure.

The law cares what you’re deciding and what factors and data you use to decide it. Sometimes it also cares that you’re using AI to do the deciding.

Ask what regulations apply to the decision process, not just to the tool.

Want to see what this looks like for your practice? Email me at adam@lawsnap.com to schedule a short, no-pressure call.

Go Deeper

Scoring Applicants? Your AI Could Be in FCRA Territory - National Law Review

Can AI Applicant Screening Trigger FCRA Obligations? - JD Supra

Groundbreaking Lawsuit Tests Whether AI Hiring Tools Trigger FCRA Compliance - Ogletree Deakins

CFPB Circular 2024-06: Algorithmic Scores as Consumer Reports - CFPB
