How Your Business Can Harness ChatGPT While Keeping Clear of Privacy Penalties

Businesses are rushing to adopt artificial intelligence tools like ChatGPT, but if they don’t design in privacy from the start, they risk facing lawsuits, fines and public backlash.

Many businesses are exploring powerful AI tools such as ChatGPT for all kinds of applications, from (1) providing personalized product recommendations to (2) offering automated customer support and (3) analyzing huge volumes of customer feedback.

Use of these AI tools raises new privacy issues because these algorithms (1) are very powerful and opaque, and nobody quite knows how they work; (2) use vast amounts of data; and (3) are upending the way we work and changing familiar routines, which will force us to rethink almost everything about our business processes, including how we manage privacy.

Businesses that want to manage their risk must develop a comprehensive privacy strategy from the start and stay on top of rapid changes in (1) the underlying technology, (2) public perceptions, and (3) laws and regulations.

A Glimpse Into the Future . . .

I’ve just come back from looking into the LawSnap Crystal Ball™. Here are some of the top business news stories of 2025 about businesses around the world that got into trouble because they implemented artificial intelligence but forgot to think about protecting privacy rights.

Online Retailer Fined $5 Million for CCPA Violations After Sharing Customer Data with AI Provider

SAN MATEO, Calif. 2025 – In a landmark case, MadcapMarketplaceofMerryMerchandise.com (MMoMM), a California-based online retailer, has agreed to pay a fine of $5 million after the California Attorney General's Office alleged violations of the California Consumer Privacy Act (CCPA). MMoMM was found to have shared its customers' personal information, including names, email addresses, and purchase history, with a third-party language model provider without obtaining proper consent.

European Software Company Faces Heavy GDPR Fines for Inadequate ChatBot Data Security Measures

BERLIN, 2025 – European software company Euromancer Enigma Systems (EES) has been hit with a €10 million fine by a European data protection authority for violating the General Data Protection Regulation (GDPR). EES employed a large language model, ChatGPT, to assist clients with technical inquiries through a chatbot. However, the company's weak security measures led to a data breach, compromising personal data of thousands of users.

Major Hospital Chain Settles HIPAA Violations Case for $5 Million After AI Chatbot Incident

CHICAGO, 2025 – National hospital chain Happy Health Helpers of Healing (HHHoH) has agreed to pay $5 million in a settlement with the U.S. Department of Health and Human Services (HHS) over violations of the Health Insurance Portability and Accountability Act (HIPAA). The case stemmed from HHHoH's use of a large language model, ChatGPT, to analyze patient records and provide insights on potential treatments without properly anonymizing the data.

Investigators found that HHHoH failed to remove personally identifiable information, including names, Social Security numbers, and medical conditions, before uploading the data to ChatGPT. As a result, protected health information (PHI) was exposed to potential misuse. In addition to the financial settlement, HHHoH will be required to implement a comprehensive corrective action plan, including staff training and stricter data handling protocols, to prevent future HIPAA violations.

The AI Wave Has Only Just Started

The release of Artificial Intelligence tool ChatGPT just a few months ago (see our earlier coverage here) has kicked off worldwide interest in AI, and businesses all over are moving fast to explore ways to transform the way they operate and the way they work with their customers, by, for example:

Giving customers personalized product recommendations based on their browsing history, purchase history, and preferences;

Offering better customer support with smart chatbots that can answer frequently asked questions, resolve simple issues, and make smart decisions about when to escalate complex problems to human representatives;

Analyzing vast amounts of customer feedback and reviews to improve product development, marketing, and customer service;

Automating repetitive and time-consuming tasks such as data entry, invoicing, and inventory management to increase efficiency, reduce mistakes, and cut costs;

Optimizing supply chain operations by predicting demand, managing inventory, and streamlining logistics;

Personalizing digital advertising by analyzing user behavior and preferences to deliver relevant and engaging content; and

Improving cybersecurity by detecting and preventing fraudulent activities, malware attacks, and other security threats.

With Great Power Comes Great Responsibility – And We Need to Build In Privacy Protection From the Start

What all the above examples have in common is that they use data about customers – their preferences, their feedback, their purchase history, and their behavior – to make business more powerful and more responsive. That is all (or mostly all …) to the good.

At the same time, this means that:

Businesses are collecting more data than ever before, and

Feeding that data into powerful algorithms, developed by third parties, that no one, including the developers of the algorithms, totally understands.

So, while these algorithms are empowering businesses, it’s crucial that those businesses also empower their customers.

How Current Regulations Do (And Don’t) Apply to Artificial Intelligence

The landscape of data privacy regulations around the world is bewilderingly complex. But to give you a sense of the variety of regulations out there and their global reach, we've compiled a list of some key privacy regulations from around the world. They include:

The European General Data Protection Regulation (GDPR);

The California Consumer Privacy Act (CCPA);

The U.S. Health Insurance Portability and Accountability Act (HIPAA);

The U.K. Data Protection Act 2018 and the UK GDPR;

The Canadian Personal Information Protection and Electronic Documents Act (PIPEDA); and

The Australian Privacy Act 1988.

These (and many other) privacy rules and regulations vary around the world – indeed, one of several challenges for modern businesses is staying on top of the regulations in the different regions where they operate – but there are some general principles that apply almost everywhere:

A business should minimize the amount of information the business holds about an individual;

A business should be transparent about what information the business is collecting and what it is using the information for;

A business should take reasonable steps to ensure that data on an individual is accurate, and that individuals can correct information the business holds about them; and

A business should make sure there are adequate safeguards to protect individual information and – related – the business must notify individuals of any breaches that involve personal information.

These are, of course, only a starting point, and a business may face additional rules and requirements based on a specific region or industry. For example, one of the most important requirements is the European GDPR’s “right to erasure” (sometimes called the “right to be forgotten”), that is, the right to have personal data removed from an organization's records. (The analog in the UK, the UK GDPR, is similar in this regard.)

New Challenges Applying Privacy Rules to AI Tools

As businesses explore implementing AI tools into their operations, it is crucial not only to think through existing privacy rules but also to prepare for the unique complexities that AI will introduce. These new challenges include:

Lack of Transparency: These algorithms are complex, maybe among the most complex things that humans have ever built. Nobody, not even their creators, is quite sure what is going on under the hood. OpenAI has not disclosed how many parameters GPT-4 has, but its predecessor GPT-3 has 175 billion. We can’t go through them parameter by parameter, and nobody seems to know how to verify whether they are complying with all the relevant regulations.

Vast Amounts of Data: These algorithms need lots of data to function effectively, potentially including personal information about customers, and raising concerns about data privacy and security.

New Organizations and Workflows: The integration of AI tools like chatbots will likely upend the way organizations interact with customers and manage internal processes.

Complicated Interconnections: AI tools may interact with other systems, including third-party systems, in novel ways, increasing their vulnerability to hacking and introducing new security risks.

Consumer Misunderstandings: AI-powered interfaces can lead to misunderstandings with consumers who may be uncertain about how their data is being used and processed.

Consent and Data Usage Concerns: There is an ongoing debate about whether the use of publicly available data for training AI models, such as ChatGPT, violates regulations like GDPR, since millions of people never explicitly consented to have their personal data used in this manner.

Political Reality: Much of the Public Mistrusts AI-Based Tools

In addition to the legal issues, businesses seeking to incorporate AI tools need to be aware of the political realities. If you are reading this, then, I hate to break it to you, but you are probably living in a “pro-AI bubble” (if it makes you feel any better, I am in that bubble too).

Much of the public is not so enthusiastic about AI, and this matters for the regulators enforcing the rules and the politicians passing laws governing AI.

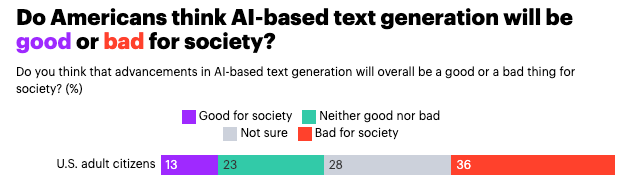

A recent poll by YouGov indicates that a majority of the public is unsure or outright hostile. Overall, over one-third of U.S. adults think tools like ChatGPT are “bad for society” and only about one tenth say they are “good for society.”

This skepticism on the part of the public is, in the short term anyway, going to lead to increased scrutiny from regulators, potentially hostile new laws from legislators, and wariness from the public.

Practical Pointers

The short version is the same message you’ve heard before, but more so: new AI tools can transform your business, but you need to focus on building trust and transparency from the start. Start with your privacy policy.

Make clear how your business is using AI: Explain how you are using AI tools and, more importantly, why you are using them, e.g., enhancing customer service, providing personalized recommendations, or analyzing user data for insights.

Make clear what data you are collecting: Explain in detail the types of personal information you are collecting, including any data shared with third-party AI providers. Explain the legal basis for processing this information, such as user consent or legitimate interest, and how long the data will be retained.

Take a hard look at how you are sharing and transferring data: If you are going to share any personal data with third-party AI providers, clearly outline the circumstances under which data sharing occurs, and the measures taken to protect user privacy. Address any cross-border data transfers, and ensure compliance with data protection regulations, such as GDPR or CCPA.

Explain how you are anonymizing data and what else you are doing to protect it: Describe the steps taken to anonymize or aggregate personal data before processing it through the AI tools, and how the company ensures that the data cannot be re-identified.

Inform people of their rights and choices: Inform users of their rights regarding personal data, such as the right to access, correct, delete, or restrict processing of their data. Note that these rights may be different for users in Europe vs. the U.S. vs. the U.K. vs. Canada vs. … you get it.

Give clear instructions for how users can exercise their rights: How can users exercise these rights? What do they do? Who do they contact? If, for example, they want to opt out of the processing of their information, make clear how they do that.

Describe your data security measures: Outline the data security measures implemented to protect personal information processed by AI tools, such as encryption, access controls, and regular security audits. Lay out your procedures for responding to data breaches and notifying affected users.

Describe your review processes: Mention any Data Protection Impact Assessments (DPIAs) or similar evaluations conducted to identify and mitigate risks associated with using AI tools for processing personal data.

Inform users about updates and revisions: Include a clause indicating that the privacy policy may be updated periodically to address new issues related to AI implementation or changes in data protection regulations. Inform users about how they will be notified of any significant changes to the privacy policy.

Include your contact information: Provide contact details for the company's data protection officer or privacy team, so users can ask questions or raise concerns about the use of AI tools and their impact on privacy.
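For readers who want a concrete picture of the anonymization pointer above, here is a minimal Python sketch of scrubbing obvious identifiers from text before it is sent to a third-party AI provider. The pattern names and the `redact` function are hypothetical, made up for this illustration, and the patterns only cover U.S.-style formats. Pattern matching like this will miss names, addresses, and medical details, so treat it as one layer of protection at best, not as proof that data cannot be re-identified.

```python
import re

# Hypothetical patterns for illustration only. Real-world PII detection
# needs much more than a few regular expressions.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each matched identifier with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    message = "Contact jane@example.com or 555-123-4567, SSN 123-45-6789"
    # Only the redacted version would be passed on to the AI provider.
    print(redact(message))
```

Running the redaction inside your own systems, before any data leaves them, means the provider never receives the raw identifiers in the first place.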

Questions? What did I miss? What should I cover next? Please leave it all in the comments.

Thanks.